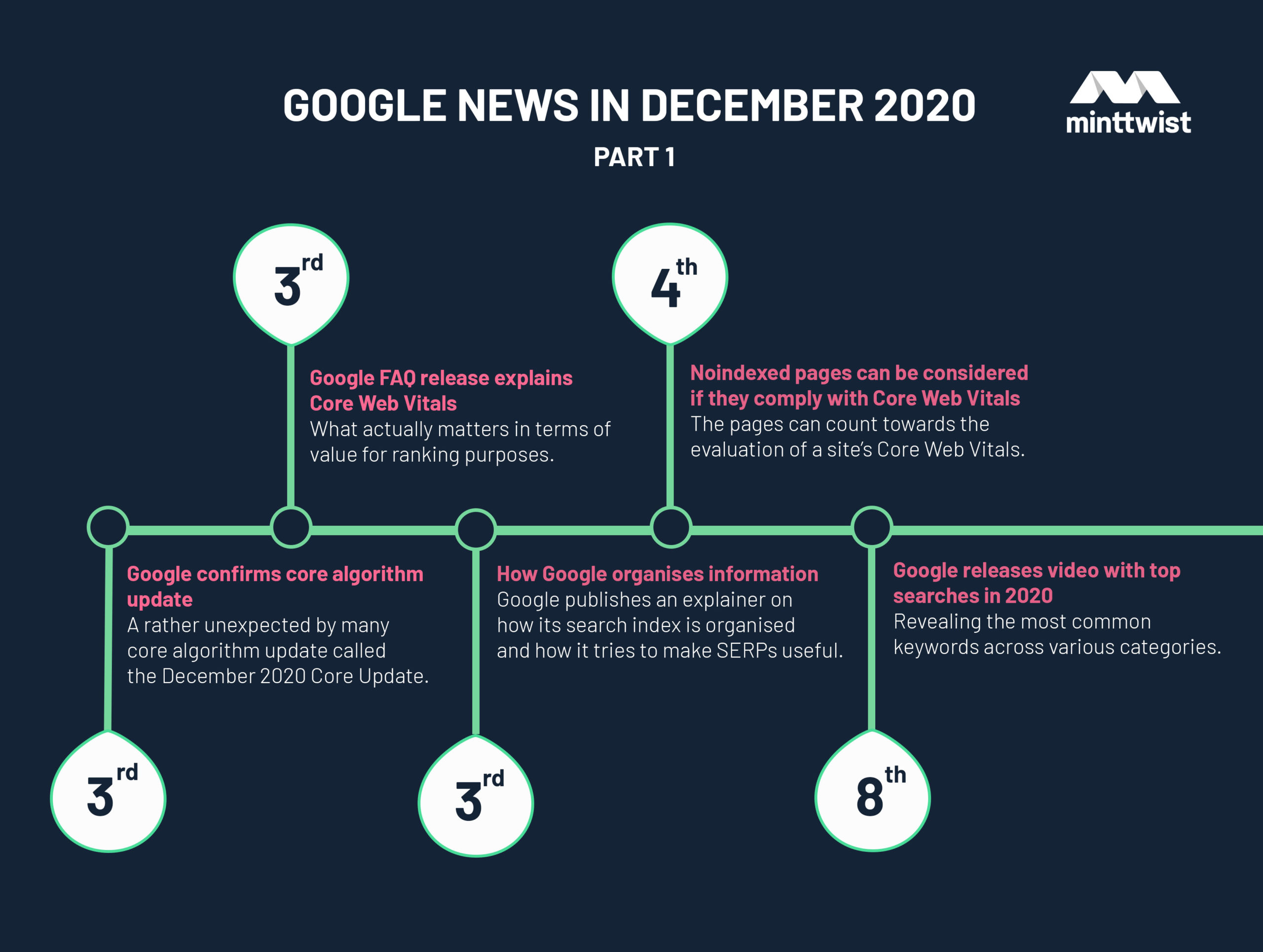

Roundup of Google updates from December 2020

The end of 2020 has finally come, so we are diving head first into the highlights and trends of this December as we patiently wait for a fresh start in 2021.

The long-awaited last month of 2020 has finally arrived, with many people longing for the year to end and hoping for a fresh start and a pandemic-free brave new world in 2021.

As festive as December could be, it came with an early “present”: a new broad core algorithm update, which Google officially confirmed on 3rd December. Its timing made it a rather peculiar core update, and it left SEO specialists with mixed feelings about its effect on search results.

Search experts reflected on what the core update could really mean and shared useful insights as to how the new changes could shape the future of search.

Should we investigate the effects of core updates only through the traditional lens of E-A-T, user experience, and backlink quality? Or should we also consider less conspicuous signals from AI and natural language processing technology?

Google also released a Core Web Vitals FAQ to help people better understand the looming ranking factor that takes effect in May 2021 and will score webpage user experience.

Lastly, Google released its report on the top trending searches of 2020, an invaluable source of inspiration for content ideas in 2021. Rest assured, content is still king!

3rd Dec: Google FAQ release explains Core Web Vitals

Google publishes an FAQ explaining how Core Web Vitals work and what actually matters for ranking purposes.

Question: Is Google recommending that all my pages hit these thresholds? What’s the benefit?

As we discussed in our November Google Roundup, the main idea behind CWV is to have a set of specific metrics available to everyone in order to improve the user experience of webpages and raise awareness of its importance.

Answer:

We recommend that websites use these three thresholds as a guidepost for optimal user experience across all pages. Core Web Vitals thresholds are assessed at the per-page level, and you might find that some pages are above and others below these thresholds.

Google

The immediate benefit will be a better experience for users that visit your site, but in the long-term, we believe that working towards a shared set of user experience metrics and thresholds across all websites, will be critical in order to sustain a healthy web ecosystem.
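The FAQ refers to “three thresholds” assessed per page without listing them. As a minimal sketch, assuming the boundaries Google published for the three Core Web Vitals in 2020 (Largest Contentful Paint “good” at ≤ 2.5 s and “poor” above 4 s; First Input Delay “good” at ≤ 100 ms and “poor” above 300 ms; Cumulative Layout Shift “good” at ≤ 0.1 and “poor” above 0.25) and that a page is judged at the 75th percentile of its real-user page loads, a per-page check could look like this. The function names and sample data are hypothetical, and the nearest-rank percentile is our own simplification, not necessarily how Google computes it.

```python
import math

# (good_max, needs_improvement_max) per metric, per Google's 2020 guidance.
# LCP and FID are in milliseconds; CLS is a unitless score.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "FID": (100, 300),
    "CLS": (0.1, 0.25),
}

def percentile_75(samples):
    """Nearest-rank 75th percentile of a non-empty list of field measurements."""
    if not samples:
        raise ValueError("need at least one measurement")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based nearest rank
    return ordered[rank - 1]

def rate(metric, value):
    """Classify one metric value as good / needs improvement / poor."""
    good_max, ni_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= ni_max:
        return "needs improvement"
    return "poor"

def assess_page(field_data):
    """Rate each metric at p75; the page passes only if all three are good."""
    ratings = {m: rate(m, percentile_75(v)) for m, v in field_data.items()}
    passes = all(r == "good" for r in ratings.values())
    return ratings, passes

# Hypothetical field measurements for a single page.
sample_page = {
    "LCP": [1800, 2100, 2400, 3900],
    "FID": [20, 40, 60, 90],
    "CLS": [0.02, 0.05, 0.08, 0.3],
}
print(assess_page(sample_page))
# → ({'LCP': 'good', 'FID': 'good', 'CLS': 'good'}, True)
```

Because the check uses the 75th percentile, a single slow page load (the 3,900 ms LCP above) does not by itself push the page out of the “good” band, which is in line with Google’s point that some pages may sit above the thresholds and others below.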

Question: Do Core Web Vitals impact ranking?

Google made it crystal clear that Core Web Vitals will become a ranking signal in May 2021.

Starting May 2021, Core Web vitals will be included in page experience signals together with existing search signals including mobile-friendliness, safe-browsing, HTTPS-security, and intrusive interstitial guidelines.

Google

Question: How does Google determine which pages are affected by the assessment of Page Experience and usage as a ranking signal?

The answer to this and similar questions suggests that there is some sort of hierarchy in the importance of ranking signals: signals related to search intent seem to be prioritised over user experience signals.

Answer:

Page experience is just one of many signals that are used to rank pages. Keep in mind that the intent of the search query is still a very strong signal, so a page with a subpar page experience may still rank highly if it has great, relevant content.

Google

Question: What can site owners expect to happen to their traffic if they don’t hit Core Web Vitals performance metrics?

Answer:

It’s difficult to make any kind of general prediction. We may have more to share in the future when we formally announce the changes are coming into effect. Keep in mind that the content itself and its match to the kind of information a user is seeking remains a very strong signal as well.

Google

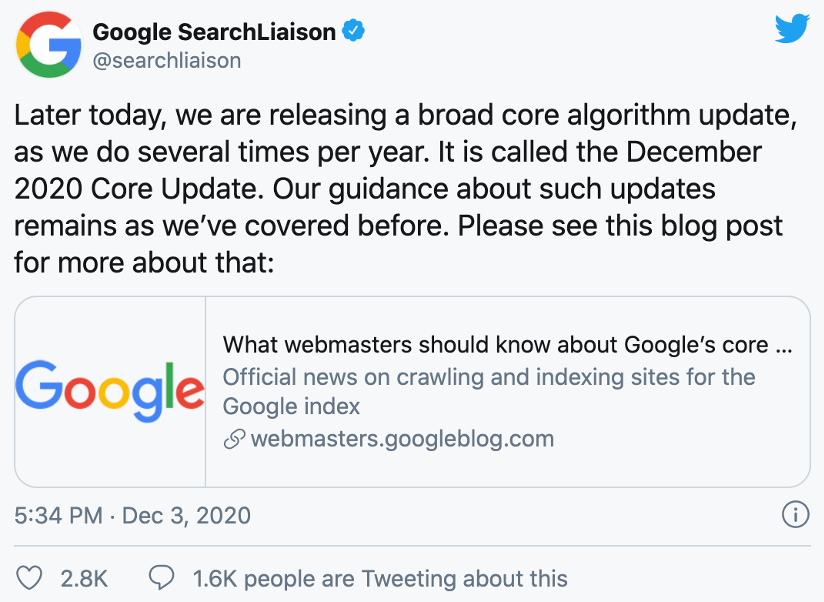

3rd Dec: Google confirms a broad core algorithm update

Google confirms a core algorithm update, called the December 2020 Core Update, that many did not expect.

The news came after the search community had long been anticipating an update, the last one having rolled out in May 2020. Given the usual interval between core updates, this one took quite a while to arrive, and many believed there wouldn’t be another update in 2020.

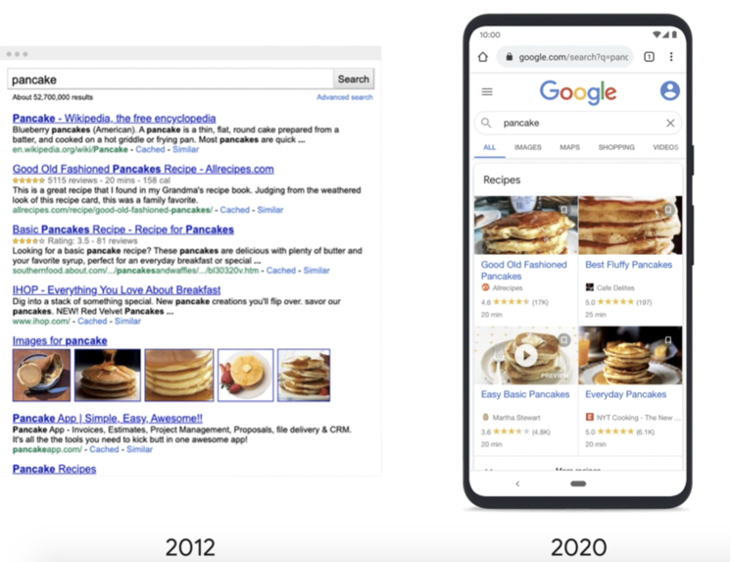

3rd Dec: How Google organises information to find what you’re looking for

Google publishes an explainer on how its search index is organised and how it tries to make SERPs useful. Google focuses on presenting information in search results in a way that is easy to scan and digest.

Today, Google’s biggest challenge is not only indexing everything on the web but presenting it to searchers in a way that is useful and accessible.

Organising information in rich and helpful features

Back in the day, Google’s search index was updated once a month; now it is updated virtually all the time. The vast volume of information made it imperative for Google to deploy richer SERP formats that can be scanned and digested more easily and, in turn, faster.

When looking for jobs, you often want to see a list of specific roles. Whereas if you’re looking for a restaurant, seeing a map can help you easily find a spot nearby.

Google

Google has introduced a range of features designed to make search results easier to scan, such as:

- Video and news carousels

- Rich imagery for recipe results

- Star labels for reviews

- Knowledge panels for people, companies, and events

More good news: Google says the number of outbound links on the first page of mobile search results has grown from 10 to 26, giving websites more chances to appear on the first page.

Organising by ranking search results

There is no better way for Google to organise information by usefulness and relevance than to rank it in search results.

To make all of this information truly useful, we also must order, or “rank,” results in a way that ensures the most helpful and reliable information rises to the top.

Google

Our ranking systems consider a number of factors–from what words appear on the page, to how fresh the content is–to determine what results are most relevant and helpful for a given query.

Google also highlighted the importance of the search intent behind some queries. When a query can be answered with a single direct answer, that answer is preferred. But direct answers are not suitable for all queries, and this is when search intent comes into play.

Google provided a very good example:

Take a query like “pizza”–you might be looking for restaurants nearby, delivery options, pizza recipes, and more…

Google

Ranking a pizza recipe first would certainly be relevant, but our systems have learned that people searching for “pizza” are more likely to be looking for restaurants, so we’re likely to show a map with local restaurants first.

Google wrapped up by making clear that ranking is dynamic: both the information available and the meaning of queries change “day-by-day”. Google constantly reviews the quality of its search results to ensure that they still provide the most relevant information.

4th Dec: Noindexed pages can count towards Core Web Vitals

Google’s John Mueller confirms that noindexed pages can count towards the evaluation of a site’s Core Web Vitals.

In plain words, webmasters sometimes exclude pages from Google’s search results with a noindex directive, often for SEO reasons. Even so, Google may still use these noindexed pages when evaluating a site’s Core Web Vitals, which will in turn feed into search rankings.

As one would expect, the topic attracted a lot of attention from site owners and SEOs and sparked a discussion in that Friday’s Google Search Central episode.

The question put to Mueller stemmed from Google’s practice of measuring a website’s Core Web Vitals based on groups of pages: what criteria does Google use to choose these groups?

Core Web Vitals will count for noindexed pages and things blocked in robots.txt. It’s quite interesting because obviously Search Console is aggregated, you get a group of pages… How do you understand that this is a group of these that are noindexed and you’ve not got the context? Or is it just based on URL path.

John Mueller, Google

Mueller also discussed why noindexed pages are considered for Core Web Vitals evaluation.

Mueller explained that since noindexed pages are still available to users, they should count towards Core Web Vitals. As a quick reminder, Core Web Vitals are essentially metrics that evaluate webpage user experience, so Mueller’s position makes sense.

My general feeling is [noindexed pages are] something that’s also part of your website. So if you use some extra functionality, and that’s noindexed, people still see it as part of your website and say this website is slow, or this website is fast kind of thing.

John Mueller, Google
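If you want to audit which of your own pages are noindexed, and therefore, per Mueller, may still count towards your Core Web Vitals, a minimal sketch that scans a page’s HTML for a robots meta tag could look like this. It is a hypothetical helper built on Python’s standard `html.parser`; it only checks `<meta name="robots">` and `<meta name="googlebot">` tags and does not look at the `X-Robots-Tag` HTTP header, which can also carry noindex.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases attribute names; values can be None.
        attrs = {name: (value or "") for name, value in attrs}
        if attrs.get("name", "").lower() in ("robots", "googlebot"):
            for part in attrs.get("content", "").split(","):
                self.directives.add(part.strip().lower())

def is_noindexed(html):
    """True if the page's meta tags contain noindex (or 'none', which implies it)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives or "none" in parser.directives

print(is_noindexed('<head><meta name="robots" content="noindex, follow"></head>'))
# → True
```

Running a check like this over your URL list shows which pages are hidden from search results but, as Mueller notes, are still seen by users and may still shape how fast or slow your site is perceived.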

The next question was whether pages can be excluded from Core Web Vitals evaluation.

Question: Is there any way to ensure that particular pages are not being used to assess how well a site passes Core Web Vitals?

I don’t have any great answers for you at the moment. Maybe. I don’t know what the information out there is on grouping at the moment, so it’s really hard for me to say exactly what you’d need to watch out for or what you can ignore.

John Mueller, Google

In general, when it comes to grouping across websites, when we try to do grouping we try to do that on the one hand by URL pattern, and on the other hand by the content of the site…So if you have parts of your website that you want to be seen as belonging together then I would definitely make sure from a URL pattern point of view it’s clear these belong together.

So if you’re specifically worried about Search pages, for example, then putting those in a folder with /search/ makes it a little bit easier for us to understand all of these search pages belong together, all of these product pages belong together, and all of these blog posts belong together. We might be able to treat them individually when it comes to Core Web Vitals.

The last part of Mueller’s response offers a good way to cope with your noindexed pages being considered for Core Web Vitals evaluation. Use folders to group your pages by URL and content, so that Google can tell which pages belong together as a group. This makes it less likely that unrelated URLs from your site are lumped together for CWV evaluation.
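Google has not published exactly how it groups URLs, so the following is only a sketch for auditing your own site structure. It buckets URLs by their first path segment (for example `/search/`, `/product/`, `/blog/`), roughly the kind of URL pattern Mueller describes. The function name and example URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

def group_by_folder(urls):
    """Bucket URLs by their first path segment, e.g. '/search/'."""
    groups = defaultdict(list)
    for url in urls:
        parts = urlparse(url).path.split("/")
        # "/search/tulips" -> ["", "search", "tulips"]; root-level pages such
        # as "/about" have at most two parts and fall into the "/" bucket.
        folder = f"/{parts[1]}/" if len(parts) > 2 else "/"
        groups[folder].append(url)
    return dict(groups)

urls = [
    "https://example.com/search/?q=roses",
    "https://example.com/search/tulips",
    "https://example.com/product/red-roses",
    "https://example.com/blog/wedding-flowers",
    "https://example.com/about",
]
for folder, members in group_by_folder(urls).items():
    print(folder, len(members))
# → /search/ 2
# → /product/ 1
# → /blog/ 1
# → / 1
```

If internal search results or other noindexed templates end up scattered across the root bucket rather than in their own folder, that is a hint your URL structure is not making the grouping Mueller describes obvious.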

8th Dec: Google releases video with top searches in 2020

Google releases its annual report of top trending searches, revealing the most popular searches across various categories.

2020 was definitely a year that generated questions. People turned to Google to ask “why” more than ever.

Recipe-related searches and questions like “what day is it?” hit record highs. As expected, coronavirus-related searches made up a big chunk of people’s queries across the globe.

Google’s report is based on data taken from trending topics on search, news, virtual events, how-tos, and more.

The following are the highlights from the top trending searches in the USA, the UK and the rest of the world:

USA

Overall top searches

- Election results

- Coronavirus

- Kobe Bryant

- Coronavirus update

- Coronavirus symptoms

Top “how to make” searches

- How to make hand sanitizer?

- How to make a face mask with fabric?

- How to make whipped coffee?

- How to make a mask with a bandana?

- How to make a mask without sewing?

Top “during coronavirus” searches

- Best stocks to buy during coronavirus

- Dating during coronavirus

- Dentist open during coronavirus

- Unemployment during coronavirus

- Jobs hiring during coronavirus

Top “why?” searches

- Why were chainsaws invented?

- Why is there a coin shortage?

- Why was George Floyd arrested?

- Why is Nevada taking so long?

- Why is TikTok getting banned?

UK

Overall top searches

- Coronavirus

- US election

- Caroline Flack

- Coronavirus symptoms

- Coronavirus update

Top “how to” searches

- How to make a face mask?

- How to make hand sanitizer?

- How to make bread?

- How to get tested for coronavirus?

- How to cut your own hair?

Top questions

- Who won the election?

- Where does vanilla flavouring come from?

- How many cases of coronavirus in the UK?

- What is VE Day?

- How did coronavirus start?

Worldwide

Top overall searches

- Coronavirus

- Election results

- Kobe Bryant

- Zoom

- IPL

Top recipe searches

- Dalgona coffee

- Ekmek

- Sourdough bread

- Pizza

- Lahmacun

Top movie searches

- Parasite

- 1917

- Black Panther

- 365 Dni

- Contagion

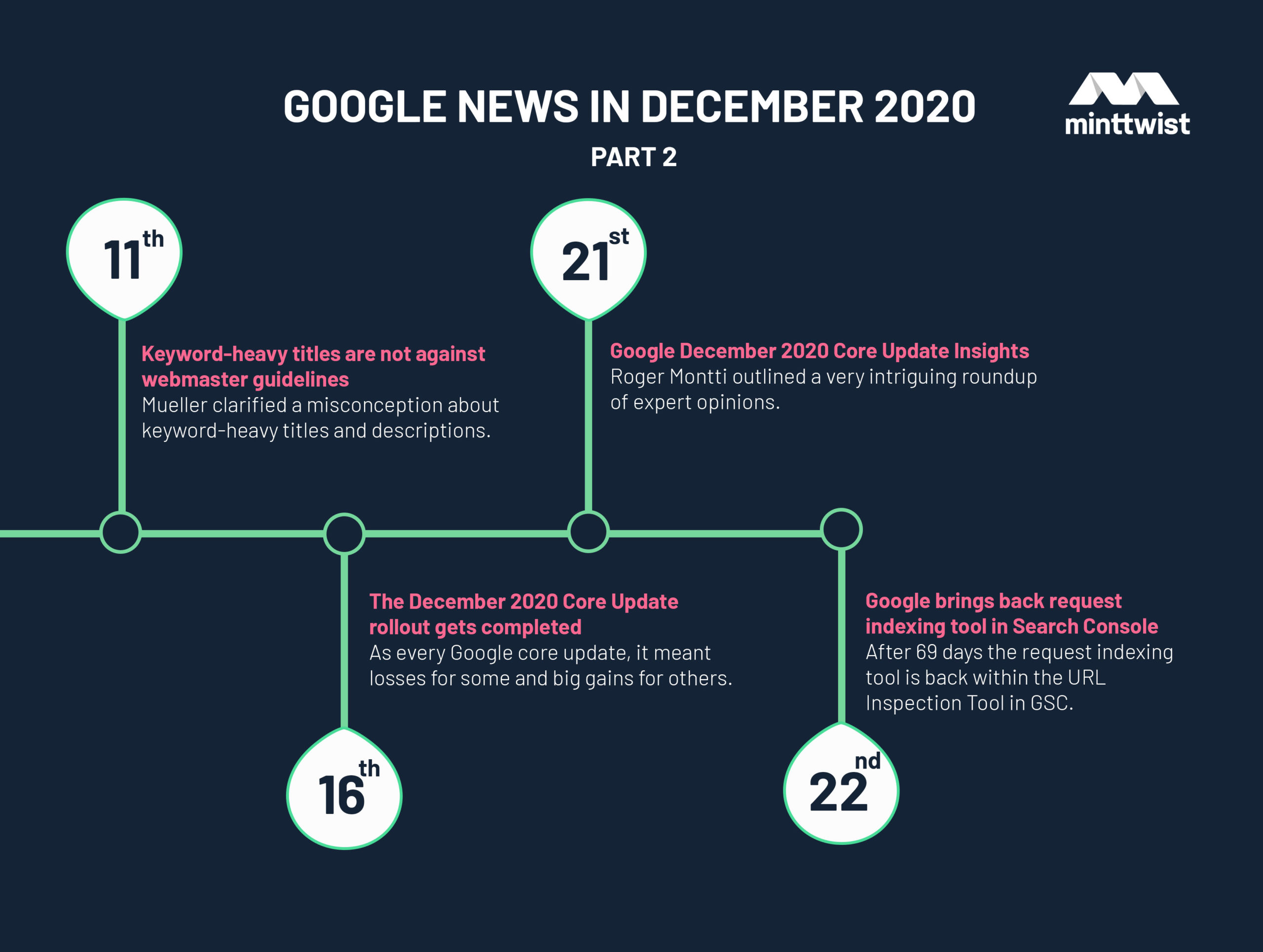

11th Dec: Google says keyword-heavy titles are “not against our guidelines”

Mueller confirmed that keyword-heavy metadata is not against webmaster guidelines.

In another insightful Google Search Central session, Mueller cleared up a misconception about keyword-heavy titles and descriptions, making clear that they are a common practice that is not against webmaster guidelines.

An SEO specialist told Mueller that he sees many businesses stuffing keywords into their titles and descriptions despite being advised not to, and that these pages very often rank really well. He went on to give the example of a Brighton-based flower business that stuffed its description with keywords like “wedding flowers Brighton, funeral flowers Brighton, anniversary flowers Brighton, birthday flowers Brighton.”

Mueller was eventually asked why these keyword-laden titles and descriptions rank highly in search results and whether this is against Google’s webmaster guidelines.

Mueller gave a fairly straightforward answer: keyword-heavy meta titles and descriptions are not against Google’s guidelines, and Google doesn’t consider them a problem.

This doesn’t mean that Google encourages the practice, as it can sometimes make it harder for Google to understand what the page is about.

Mueller recommended focusing on writing better meta tags to improve click-through rates (CTRs), rather than expecting them to improve rankings.

It’s not against our webmaster guidelines. It’s not something that we would say is problematic. I think, at most, it’s something where you could improve things if you had a better fitting title because we understand the relevance a little bit better.

John Mueller, Google

And I suspect the biggest improvement with a title in that regard there is if you can create a title that matches what the user is actually looking for then it’s a little bit easier for them to actually click on a search result because they think “oh this really matches what I was looking for.”

So that’s something where I almost think it’s a matter of improving the click-through rate rather than improving the ranking. And if with the same ranking, you get a higher click-through rate because people recognize your site as being more relevant then that’s kind of a good thing.

16th Dec: The December 2020 Core Update rollout gets completed

As is typical, the update rolled out over about two weeks, and on 16th December Google confirmed that the December core update was complete.

As with every Google core update, it meant losses for some sites and big gains for others. Its rollout, though, was rather atypical: volatility spiked on 4th December, a run of rather flat days followed, and the second wave kicked in on 10th December.

21st Dec: Google December 2020 Core Update insights

In an article for Search Engine Journal, Roger Montti compiled an intriguing roundup of expert opinions on what the December core update could actually mean, including his own viewpoint, which we think is spot-on.

In my opinion, Google updates have increasingly been less about ranking factors and more about improving how queries and web pages are understood.

Roger Montti, author at Search Engine Journal

Montti does not share the opinion that Google is randomising search results in order to thwart those who try to reverse-engineer its algorithm.

He suggests that it’s difficult to point to specific algorithm features, such as BERT or Neural Matching, to explain search results. According to Montti, it’s far easier to reach for things like backlinks, E-A-T, or user experience to explain why a site is or isn’t ranking, even when the actual reason lies in the AI technology Google has implemented.

So it makes sense that search engine results pages (SERPs) seem confusing when viewed through the traditional, old-school lens, when the real reason web page rankings have changed so drastically over the years is Google’s AI technology.

The December 2020 Core Update was a unique one to watch roll out. Many sites we work with started with losses and ended with wins, and vice-versa. So clearly it had something to do with a signal or signals that cascade.

Dave Davies (@oohloo)

If we think about the timing, and how it ties to the rolling out of passage indexing and that it’s a Core Update, I suspect it ties to content interpretation systems and not links or signals along those lines.

...I believe it has to do with future features and capabilities, but I’ve been around long enough to know I could be wrong, and I need to watch closely.

Dave Davies of Beanstalk Internet took a similar position to Roger Montti’s, suggesting that features Google has said are coming might contribute to the SERP fluctuations.

This one seems to be tricky. I’m finding gains and losses. I would need to wait more for this one.

Steven Kang (@SEOSignalsLab)

Steven Kang of the SEO Signals Lab Facebook group suggests more time is needed to draw conclusions, as this update seems more unusual than most.

High level, Google is adjusting things that have a global impact in core updates.

Christoph Cemper (@cemper)

a) Weight ratios for different types of links, and their signals

I think the NoFollow 2.0 rollout from Sept 2019 is not completed, but tweaked. I.e. how much power for which NoFollow in which context.

b) Answer boxes, a lot more. Google increases its own real estate

c) Mass devaluation of PBN link networks and quite obvious footprints of “outreach link building.” Just because someone sent an outreach email doesn’t make a paid link more natural, even if it was paid with “content” or “exchange of services.”

Christoph Cemper listed a set of factors that he believes were affected by the latest update.

Views on the fallout of the latest Google core update vary. Where opinions seem to converge is that, this time, none of the usual obvious changes are easy to distinguish.

22nd Dec: Google brings back request indexing tool in Search Console

In a Twitter announcement, Google confirms that the request indexing tool is back in Google Search Console.

After 69 days of being disabled, the request indexing feature is back within the URL Inspection tool in GSC. Its comeback had been expected before the Christmas holiday shopping season, and it did indeed arrive just in time for Christmas and the new year.

Google also reminded us:

Reminders!

Google

1. If you have large numbers of URLs, you should submit a sitemap instead of requesting indexing via Search Console.

2. Requesting indexing does not guarantee inclusion to the Google index – our systems prioritize the fast inclusion of high quality, useful content.
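For the first reminder, a sitemap is an XML file that follows the sitemaps.org protocol: a `<urlset>` root element containing one `<url>` entry with a `<loc>` per page, plus an optional `<lastmod>` date. A minimal sketch that builds one with Python’s standard library, assuming you already have a list of canonical URLs (the function name and URLs are hypothetical):

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls, lastmod=None):
    """Return sitemap XML with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes "&" as "&amp;", which the protocol requires.
        ET.SubElement(entry, "loc").text = url
        if lastmod is not None:
            # W3C Datetime format, e.g. "2020-12-22".
            ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

print(build_sitemap(
    ["https://example.com/", "https://example.com/shop?page=2&sort=new"],
    lastmod="2020-12-22",
))
```

A single sitemap file is limited to 50,000 URLs under the protocol, so larger sites would split their URLs across several files and list them in a sitemap index.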

All that aside, the comeback means the world to SEOs and site owners, who know only too well how much they missed this GSC capability. They know first-hand how important it is to be able to promptly request indexing of important URLs, whether these are old URLs with updated content or new ones you want to get into the SERPs as soon as possible.

Need help with managing your SEO strategy? Get in touch with us today!
